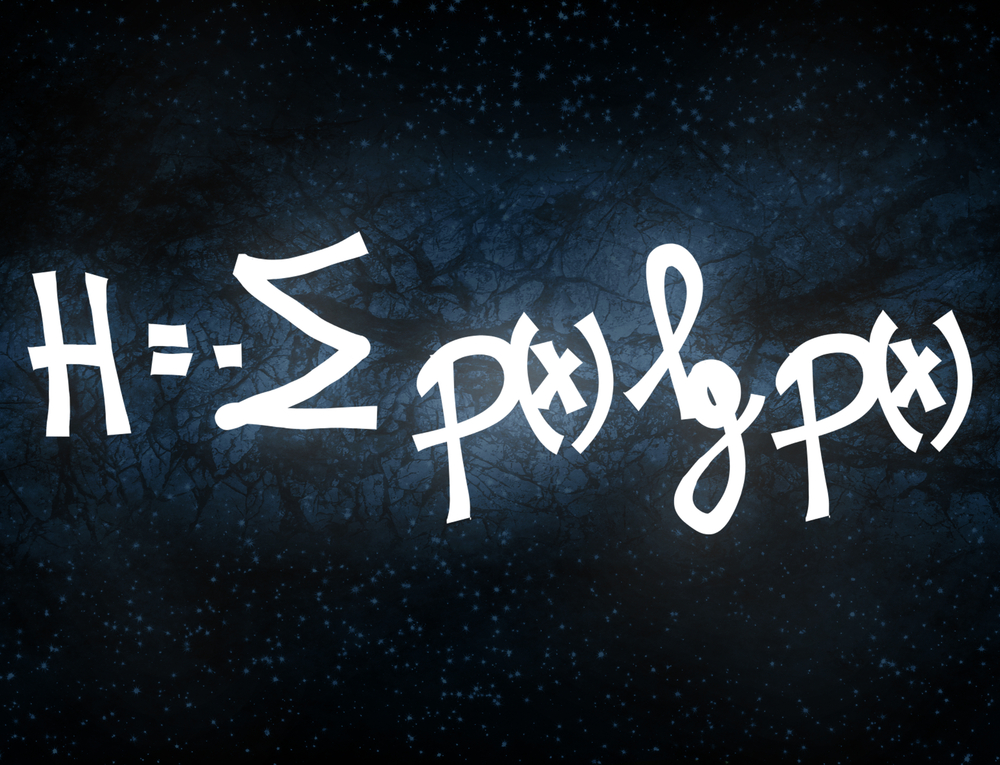

This is a graduate-level introduction to the mathematics of information theory. The course covers both classical and modern topics, including information entropy, lossless data compression, binary hypothesis testing, channel coding, and lossy data compression. If you want to learn information theory, this course is for you.

Assessment

This course does not involve any written exams. To complete the course, students must answer 5 assignment questions. Answers are submitted as written work in PDF or Word format, and students can write them in their own time. Each answer needs to be 200 words (1 page). Once the answers are submitted, the tutor will check and assess the work.

Certification

Edukite courses are free to study. To successfully complete a course, you must submit all of the course's assignments as part of the assessment. Upon successful completion of a course, you can choose to make your achievement formal by obtaining your certificate at a cost of £49.

An official Edukite certificate is a great way to celebrate and share your success. You can:

- Add the certificate to your CV or resume and brighten up your career

- Show it to prove your success

Course Credit: MIT

Course Curriculum

| Lecture | Duration (hh:mm:ss) |
| --- | --- |
| **Information Measures** | |
| Information measures: entropy and divergence | 00:15:00 |
| Information measures: mutual information | 00:10:00 |
| Sufficient statistic. Continuity of divergence and mutual information | 00:00:00 |
| Extremization of mutual information: capacity saddle point | 00:10:00 |
| Single-letterization. Probability of error. Entropy rate | 00:10:00 |
| **Lossless Data Compression** | |
| Variable-length lossless compression | 00:15:00 |
| Fixed-length (almost lossless) compression. Slepian-Wolf problem | 00:10:00 |
| Compressing stationary ergodic sources | 00:10:00 |
| Universal compression | 00:10:00 |
| **Binary Hypothesis Testing** | |
| Binary hypothesis testing | 00:10:00 |
| Hypothesis testing asymptotics I | 00:10:00 |
| Information projection and large deviations | 00:10:00 |
| Hypothesis testing asymptotics II | 00:10:00 |
| **Channel Coding** | |
| Channel coding | 00:10:00 |
| Channel coding: achievability bounds | 00:10:00 |
| Linear codes. Channel capacity | 00:10:00 |
| Channels with input constraints. Gaussian channels | 00:10:00 |
| Lattice codes (by O. Ordentlich) | 00:10:00 |
| Channel coding: energy-per-bit, continuous-time channels | 00:15:00 |
| Advanced channel coding. Source-channel separation | 00:10:00 |
| Channel coding with feedback | 00:15:00 |
| Capacity-achieving codes via Forney concatenation | 00:10:00 |
| **Lossy Data Compression** | |
| Rate-distortion theory | 00:10:00 |
| Rate-distortion: achievability bounds | 00:10:00 |
| Evaluating R(D). Lossy source-channel separation | 00:10:00 |
| **Advanced Topics** | |
| Multiple-access channel | 00:10:00 |
| Examples of MACs. Maximal Pe and zero-error capacity | 00:10:00 |
| Random number generators | 00:10:00 |
| **Assessment** | |
| Submit Your Assignment | 00:00:00 |
| Certification | 00:00:00 |